Taylor McDonnell and Tim McLain, Department of Mechanical Engineering

Introduction:

Commercial applications of unmanned aerial systems (UAS) are expected to grow

significantly in the coming years [1]. Applications of unmanned aerial vehicles in the

commercial market include agricultural and infrastructure monitoring, aerial

photography, package delivery, fire monitoring, wildlife tracking, and search and rescue

operations. One of the purposes of the AUVSI-SUAS

competition is to train undergraduates for the growing

UAS industry. In this competition a team’s UAS must

autonomously identify and locate several targets, which

are geometric shapes with a given shape, shape color,

letter, letter color, geolocation, and orientation. The

focus of this project is to develop the image processing

and geolocation software necessary to participate in this

competition.

Methodology:

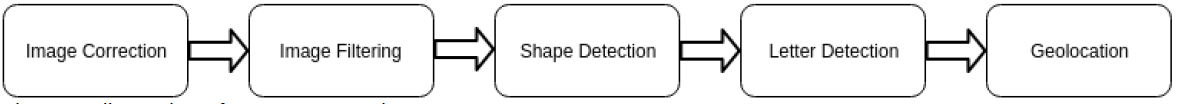

Our approach to autonomously identify and classify targets includes several phases. At

each phase additional information is bundled with the image and passed to the next

step for further processing. This framework is implemented using the Robot Operating

System (ROS), a set of software libraries and tools.
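The idea of bundling information with the image as it moves through the phases can be sketched in plain Python. This is not ROS code and not the team's implementation. The names `TargetCandidate`, `run_pipeline`, and the two placeholder phases are hypothetical and only illustrate the data flow.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List


@dataclass
class TargetCandidate:
    """An image plus everything earlier phases have learned about it."""
    image: Any
    info: Dict[str, Any] = field(default_factory=dict)


# A phase takes a candidate, adds information, and hands it on.
Phase = Callable[[TargetCandidate], TargetCandidate]


def run_pipeline(candidate: TargetCandidate, phases: List[Phase]) -> TargetCandidate:
    """Pass the candidate through each phase in order."""
    for phase in phases:
        candidate = phase(candidate)
    return candidate


def correct_image(candidate: TargetCandidate) -> TargetCandidate:
    # Placeholder phase: a real one would warp candidate.image.
    candidate.info["corrected"] = True
    return candidate


def detect_shape(candidate: TargetCandidate) -> TargetCandidate:
    # Placeholder phase: a real one would analyze blob contours.
    candidate.info["shape"] = "placeholder"
    return candidate
```

Because each phase only reads and extends the bundle, a phase can be swapped for a different technique without touching the others.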

The first phase of our image processing is image correction. The geometric shapes

which represent the targets in this competition are simple 2D shapes, but when seen at

any angle other than straight down, these shapes appear distorted. The

image correction step removes this distortion. In this phase, the geolocated position of

each corner is used to transform the image to appear as if the UAS is directly over the

target.
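One common way to express such a correction is a planar homography fitted to four point correspondences. The sketch below assumes that the corners in question are the four image corners and that their ground coordinates are already known. The function names and the numeric values in the usage note are illustrative assumptions, not the project's code.

```python
def solve_linear(a, b):
    """Solve a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [list(row) + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        if abs(m[pivot][col]) < 1e-12:
            raise ValueError("degenerate corner configuration")
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        tail = sum(m[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (m[r][n] - tail) / m[r][r]
    return x


def homography(src, dst):
    """Fit the 3x3 homography (row-major, last entry 1) mapping src to dst.

    src and dst are lists of four (x, y) pairs, e.g. image corners in
    pixels and the matching ground positions in meters.
    """
    a, b = [], []
    for (x, y), (u, v) in zip(src, dst):
        a.append([x, y, 1, 0, 0, 0, -u * x, -u * y])
        b.append(u)
        a.append([0, 0, 0, x, y, 1, -v * x, -v * y])
        b.append(v)
    return solve_linear(a, b) + [1.0]


def warp_point(h, x, y):
    """Map one pixel coordinate through the homography."""
    w = h[6] * x + h[7] * y + h[8]
    return ((h[0] * x + h[1] * y + h[2]) / w,
            (h[3] * x + h[4] * y + h[5]) / w)
```

For example, `homography([(0, 0), (640, 0), (640, 480), (0, 480)], [(0, 0), (10, 0), (8, 6), (2, 6)])` fits a transform that sends each pixel corner to its made-up ground position. Resampling every pixel through the same mapping would produce the top-down view.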

After the image correction phase, the image is filtered to identify large contiguous

portions of the same color. These regions (or blobs) are identified as potential shapes

and the contours of these blobs are extracted to be analyzed.
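A minimal sketch of the blob step, assuming the image has already been quantized so that each pixel carries a single color label: connected regions of equal label are collected with a breadth-first flood fill, and regions below a size threshold are dropped. The function name, the 4-neighbor connectivity, and the `min_size` parameter are assumptions made for illustration.

```python
from collections import deque


def find_blobs(grid, min_size):
    """Return (color, pixels) for each connected same-color region.

    grid is a sequence of rows, each a sequence of color labels.
    Regions with fewer than min_size pixels are discarded.
    """
    rows, cols = len(grid), len(grid[0])
    seen = [[False] * cols for _ in range(rows)]
    blobs = []
    for r0 in range(rows):
        for c0 in range(cols):
            if seen[r0][c0]:
                continue
            color = grid[r0][c0]
            seen[r0][c0] = True
            queue = deque([(r0, c0)])
            pixels = []
            while queue:
                r, c = queue.popleft()
                pixels.append((r, c))
                # Visit the four edge-adjacent neighbors.
                for nr, nc in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
                    if (0 <= nr < rows and 0 <= nc < cols
                            and not seen[nr][nc] and grid[nr][nc] == color):
                        seen[nr][nc] = True
                        queue.append((nr, nc))
            if len(pixels) >= min_size:
                blobs.append((color, pixels))
    return blobs
```

Note that the background itself comes back as a large blob. In this sketch it is left to the later shape check to reject it.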

In the shape detection phase, metrics of each blob, such as

the ratio of perimeter to area, are compared with metrics of

potential shapes [2]. When a blob’s metrics match a shape’s

metrics within a specified tolerance, the blob is identified as

that shape. If no shape is assigned, the blob is rejected as a

potential target.
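The matching rule can be illustrated with a single metric. The raw perimeter-to-area ratio changes with the size of the blob, so this sketch assumes the scale-invariant variant, perimeter squared over area. The reference values, the 5% tolerance, and the function names are illustrative choices and are not taken from the project or from [2].

```python
import math


def polygon_metrics(points):
    """Return (perimeter, area) of a closed polygon given as (x, y) vertices."""
    twice_area = 0.0
    perimeter = 0.0
    for (x1, y1), (x2, y2) in zip(points, points[1:] + points[:1]):
        twice_area += x1 * y2 - x2 * y1          # shoelace formula
        perimeter += math.hypot(x2 - x1, y2 - y1)
    return perimeter, abs(twice_area) / 2.0


def compactness(points):
    """Perimeter squared over area; independent of the polygon's scale."""
    perimeter, area = polygon_metrics(points)
    return perimeter * perimeter / area


# Ideal values of the metric for a few candidate shapes.
REFERENCE = {
    "circle": 4.0 * math.pi,
    "square": 16.0,
    "triangle": 36.0 / math.sqrt(3.0),   # equilateral
}


def classify(points, tolerance=0.05):
    """Return the closest reference shape within tolerance, or None."""
    value = compactness(points)
    best = None
    for name, ref in REFERENCE.items():
        error = abs(value - ref) / ref
        if error <= tolerance and (best is None or error < best[1]):
            best = (name, error)
    return best[0] if best else None
```

A single metric cannot separate every pair of shapes, which is why the text speaks of several metrics. A contour that matches no reference, such as a long thin rectangle, returns `None` and would be rejected.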

Letter detection is performed using Tesseract, an open source optical character

recognition engine. Before running Tesseract, everything in the blob except the letter

itself is filtered out. The image is then rotated and Tesseract is run at several angles to determine

both the character and the direction in which the target is oriented.
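The rotate-and-recognize search can be sketched independently of the OCR engine. In the sketch below, `recognize` stands in for a call to Tesseract and is assumed to return a character together with a confidence score. To keep the example self-contained, a toy template matcher and a rotation in 90-degree steps are used instead. All names here are hypothetical.

```python
def best_orientation(image, rotate, recognize, angles):
    """Try each rotation and keep the most confident recognition.

    Returns (character, angle, confidence) for the best attempt.
    """
    best = None
    for angle in angles:
        char, confidence = recognize(rotate(image, angle))
        if best is None or confidence > best[2]:
            best = (char, angle, confidence)
    return best


def rotate90(image, angle):
    """Rotate a grid (list of strings) clockwise by a multiple of 90 degrees."""
    for _ in range((angle // 90) % 4):
        image = ["".join(row[c] for row in reversed(image))
                 for c in range(len(image[0]))]
    return image


# Toy stand-in for an OCR engine: one upright template per character.
TEMPLATES = {"L": ["X.", "X.", "XX"]}


def toy_recognize(image):
    """Return (character, score), where score is the fraction of matching cells."""
    best_char, best_score = None, 0.0
    for char, template in TEMPLATES.items():
        if len(image) != len(template) or len(image[0]) != len(template[0]):
            continue
        cells = [a == b for row_a, row_b in zip(image, template)
                 for a, b in zip(row_a, row_b)]
        score = sum(cells) / len(cells)
        if score > best_score:
            best_char, best_score = char, score
    return best_char, best_score
```

The angle that yields the highest confidence indicates how far the letter was rotated away from upright, which in turn gives the orientation of the target.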

The last phase of image processing, geolocation, is performed using geometric relations

obtained from the UAS autopilot and gimbal [3].
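A heavily simplified sketch of the underlying geometry, assuming flat ground and a target at the center of the image: the camera's line of sight is extended from the aircraft until it meets the ground plane. Aircraft roll and pitch, pixel offsets, and camera calibration are ignored here. The function and parameter names are hypothetical, and the full formulation is the one referenced in [3].

```python
import math


def geolocate(north, east, altitude, azimuth_deg, depression_deg):
    """Intersect the camera line of sight with flat ground.

    north, east     -- aircraft position in a local frame (meters)
    altitude        -- height above the ground plane (meters)
    azimuth_deg     -- line-of-sight bearing, clockwise from north
    depression_deg  -- line-of-sight angle below the horizon
    Returns the (north, east) position of the ground point.
    """
    az = math.radians(azimuth_deg)
    dep = math.radians(depression_deg)
    # Unit line-of-sight vector in north-east-down coordinates.
    los = (math.cos(dep) * math.cos(az),
           math.cos(dep) * math.sin(az),
           math.sin(dep))
    if los[2] <= 0.0:
        raise ValueError("line of sight does not reach the ground")
    # Stretch the unit vector until it has descended by the full altitude.
    scale = altitude / los[2]
    return north + scale * los[0], east + scale * los[1]
```

With the camera pointing straight down the result is the point directly below the aircraft. At a 45 degree depression angle the ground point lies one altitude away along the bearing.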

Results:

Preliminary results for this project have shown good promise. Since switching to the

current color-based image filtering technique, filtering is quicker and more reliably finds

potential targets. Letter detection, after adding preprocessing, has a high success rate

of predicting the correct alphanumeric character. The shape detection method has

been shown to work, but has potential for improvement. The image correction and

geolocation phases require validation and will need to be tested.

Discussion:

One purpose of the AUVSI-SUAS competition is to train students for the growing UAS

industry, and this project has done that. Several lessons were learned

from participating in this project. A few are presented here:

- Leverage all accessible information. Our current and most effective blob detection technique works because it is simple, customized to our individual targets, and uses more available image information than other techniques.

- Having an effective software organization method greatly aids systems integration. Using ROS enabled us to have a parallel workflow and allowed us to try many different image processing techniques without having to change many portions of the code.

- While good for combining elements into the final software package, ROS was not good for conceptual development. It is suggested that conceptual development be done in a user-friendly coding language/environment such as MATLAB before implementing within the final software structure.

- Testing is nontrivial. The image processing pipeline presented here requires information from the autopilot, gimbal, and camera in real time in order to work. Thus test flights dedicated to image processing need to be carefully planned in order to validate the developed code.

Conclusion:

While our AUVSI team was not prepared to compete in the AUVSI-SUAS competition

this year, we developed a strong foundation for future teams to build upon. With proper

testing and debugging, next year’s team should be able to represent BYU well at next

year’s competition.

Sources:

[1] Weissbach, D. & Tebbe, K. (2016). Drones in sight: rapid growth through M&A’s in a soaring new

industry. Strategic Direction, 32(6), 37-39.

[2] Jorge, J. & Fonseca, M. (2000). A Simple Approach to Recognise Geometric Shapes Interactively.

Graphics Recognition Recent Advances, 266-274. http://dx.doi.org/10.1007/3-540-40953-x_23

[3] Barber, D., Redding, J., McLain, T., Beard, R., & Taylor, C. (2006). Vision-based Target Geo-location

Using a Fixed-wing Miniature Air Vehicle. Journal of Intelligent and Robotic Systems, 47(4), 361-382.