Jared Shores and Dr. Joseph Price, Economics Department

I have always been drawn to the arts. Growing up, I was continually playing and writing music. As I grew older, my interest broadened into the visual arts, specifically film. Although I am an economics major, I have continually looked for opportunities to apply what I was learning to the film industry. The idea for this project started after my Price Theory II class in the spring of 2009. My professor, Dr. Price, encouraged my desire to investigate why so few family-friendly films were being created when that was arguably the largest film market. This simple conversation led to some initial investigation and then to a class research project. While I was seeking Dr. Price’s advice on data retrieval, he encouraged me to apply for this grant and dig deeper.

The premise for my research is simple. I wanted to know whether the Motion Picture Association of America (MPAA) rating system had any effect on the revenue a film could possibly make. Although this topic has been lightly addressed in other research, it was not the main focus of any published paper but rather a small control that was accounted for.

Investigating a question like this requires large amounts of data. For this project we combined five data sets for a total sample size of over 2,000 movies. We had to combine these different data sets to account for as many variables as possible, including the release date, total box-office revenue, genre, budget, and rating of each film.

One of the most difficult parts of this project was a method called merging. For this project we used STATA, a statistical analysis package, which is very particular about coding and combining elements. An example can help illustrate the often tedious process that STATA can necessitate. Pretend that you have three different data sets with information about a movie called “The Valley of Fun”. The one problem is that each data set has a small discrepancy in the title: one is “The Valley of Fun”, the next is “Valley of Fun, The”, and the last is “The_Valley_of_Fun”. Without exact code telling STATA how to normalize the movie title, the data will not combine and will therefore be of no worth. This was a constant process of trial and error that required a lot of help from Dr. Price and his research assistants.
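The title problem described above can be sketched in a few lines. This is only an illustration in Python, not the STATA code we actually used; the helper names `normalize_title` and `merge_by_title` are hypothetical, and the revenue, rating, and budget values are made-up placeholders.

```python
import re

def normalize_title(title: str) -> str:
    """Reduce a movie title to a canonical merge key (hypothetical helper)."""
    # Treat underscores as spaces and trim the ends.
    t = title.replace("_", " ").strip()
    # Move a trailing ", The" / ", A" / ", An" back to the front.
    m = re.match(r"^(.*),\s*(the|a|an)$", t, flags=re.IGNORECASE)
    if m:
        t = f"{m.group(2)} {m.group(1)}"
    # Collapse runs of whitespace and lowercase the result.
    return re.sub(r"\s+", " ", t).lower()

def merge_by_title(*datasets):
    """Combine several {title: {field: value}} dicts on the normalized title."""
    merged = {}
    for data in datasets:
        for title, fields in data.items():
            merged.setdefault(normalize_title(title), {}).update(fields)
    return merged

# Three placeholder data sets that spell the same title differently.
box_office = {"The Valley of Fun": {"revenue": 1_000_000}}
ratings = {"Valley of Fun, The": {"mpaa": "PG"}}
budgets = {"The_Valley_of_Fun": {"budget": 400_000}}

merged = merge_by_title(box_office, ratings, budgets)
```

Once all three spellings reduce to the same key, the three records collapse into a single row per film. Real titles are messier (punctuation, subtitles, remakes that share a name), so a key like this would usually need to be paired with the release year.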

With the data we were able to accumulate, we ran several different types of regressions to better understand what effect MPAA ratings really had on box-office revenues. To broaden our project, we also decided to investigate how different content drives ratings. If we are able to show how MPAA ratings affect a film’s revenue while also discovering what drives MPAA ratings, we could then tell what film content affects film revenues the most. This information would be extremely valuable to any movie studio or producer.

To do this we gathered data from three alternative film rating websites: dove.org, screenit.com, and kidsinmind.com. All three sites rate each movie and give greater detail on exactly what content is contained in each film, including counts of specific profanities. These sites also numerically rate each film in different content areas such as profanity, sexuality, drug use, violence, and gore. By taking this data from each site we created a score for every film. With this score we were able to re-rate each movie independently of its MPAA rating. We called this rating a film’s latent score.
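One simple way to build such a score, shown here only as a sketch, is to rescale each site's ratings to a common 0 to 1 range and average them. The averaging rule, the site labels, the scale maxima, and the example ratings below are all assumptions made for illustration; they are not the formula or the data used in this project.

```python
from statistics import mean

# Hypothetical maximum of each site's rating scale (assumed, not from the report).
SCALE_MAX = {"site_a": 10, "site_b": 5, "site_c": 10}

def latent_score(site_ratings):
    """Average a film's content ratings after rescaling each site to 0-1."""
    per_site = []
    for site, categories in site_ratings.items():
        # Put every category on a 0-1 scale so sites are comparable.
        rescaled = [value / SCALE_MAX[site] for value in categories.values()]
        per_site.append(mean(rescaled))
    # Give each site equal weight in the final score.
    return mean(per_site)

# Made-up ratings for one film across the three hypothetical sites.
film = {
    "site_a": {"profanity": 5, "violence": 5},
    "site_b": {"profanity": 2, "violence": 3},
    "site_c": {"profanity": 4, "violence": 6},
}
score = latent_score(film)
```

Because the score depends only on content ratings, two films with different MPAA ratings can end up with the same latent score.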

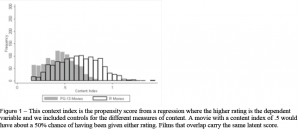

By using this latent score and the other variables we collected, we were able to find movies that matched on every variable except MPAA rating. This allows us to find two movies that are identical on every variable we could control for. By allowing the only difference to be the MPAA rating, we were able to isolate the effect that it has on a film’s revenue. Figure 1 is a graphical representation of this overlap in the latent scores of PG-13 and R-rated films. In essence, it shows PG-13 and R-rated films that have the same content in them.
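The matching idea can be sketched as follows. This is a simplified illustration that pairs films on latent score alone, whereas our comparison also used the other variables we collected; the function name `matched_log_gap`, the tolerance, and the three example films are invented for the sketch and are not drawn from our data.

```python
import math

def matched_log_gap(films, tolerance=0.05):
    """Mean log-revenue gap (R minus PG-13) over pairs with similar latent scores."""
    pg13 = [f for f in films if f["mpaa"] == "PG-13"]
    r_rated = [f for f in films if f["mpaa"] == "R"]
    gaps = [
        math.log(b["revenue"]) - math.log(a["revenue"])
        for a in pg13
        for b in r_rated
        if abs(a["latent"] - b["latent"]) <= tolerance
    ]
    # Return None when no PG-13/R pair falls inside the overlap.
    return sum(gaps) / len(gaps) if gaps else None

# Invented films: only the first two have comparable content.
films = [
    {"mpaa": "PG-13", "latent": 0.50, "revenue": 100.0},
    {"mpaa": "R", "latent": 0.52, "revenue": 80.0},
    {"mpaa": "R", "latent": 0.90, "revenue": 90.0},  # too far away to match
]
gap = matched_log_gap(films)
percent_change = math.exp(gap) - 1  # convert the log gap to a proportional change
```

Working in logs means the gap reads as a proportional difference in revenue, which is how a statement such as "X percent worse" is usually obtained from a regression on log revenue.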

After running several different types of linear regressions, we found that R-rated films do around 30 percent worse in box-office revenue than their PG-13 counterparts. We were unable to find any statistical difference between PG-13, PG, and G-rated films.

Although the percentages are higher than we were expecting, the result agrees with our original hypothesis. From a theoretical perspective, these findings imply that film producers are irrational. Under theoretical assumptions, each market (R, PG-13, PG, and G) should equalize in revenues. If any one market is more profitable than another, then producers would flood that market until profits normalize. This should especially be true since entry into each market can be assumed to have the same costs. Despite these results, R-rated movies are produced at a ratio of almost 2:1 to PG-13 films and 4:1 to PG films.