Blake Smith and Robert Reynolds, Digital Humanities

Chinese in its written form, whether typed or penned, does not separate its characters by spaces. Imagine if this were the case with English, and a sign for a job fair were to display “opportunityisnowhere.” Regardless of the intent being to announce that “opportunity is now here,” the ambiguity caused by the lack of spacing also enables a negative reading. Figuring out where the spaces, or word boundaries, belong in Chinese can even be tricky on occasion even for native speakers. Imagine then how difficult this task is for computers. And so, machines need to be able to decipher word boundaries in Chinese text before they can do anything else with it such as translation or web-search. Computational tools that do this essential preprocessing are called segmenters. They take spaceless Chinese text as input and output their best guess at a spaced version. (See Figure 1.)

The approach segmenters take varies in deciphering word boundaries and inserting spaces. They can draw on hard-coded linguistically motivated rules (such as inserting a space after every possessive particle), or, they can compute statistical probabilities for every character sequence based on examples in training data. While there do exist ways of combining rule- and statistical-based methods as well as integrating part-of-speech labels, the simple answer is that statistical segmenters produce the best results.

Figure 1. Segmenter Input/Output.

Statistical word segmenters work, as mentioned, because they ‘learn’ from training data. These training data are texts of Chinese which have spaces manually inserted by hand through the painstaking efforts of native speakers. The computer calculates the probabilities it will use to segment based on these human-quality segmentations. Human-quality, however does not mean perfect quality and as a result there is a high likelihood for variation in the segmentations of hand-segmented text. This means that for one sequence of characters, take “ABC” for example, the same characters could appear in the human-segmented text 30 times as “AB C” and 10 times as “A BC”. Due to the ambiguity present in spaceless Chinese, this inconsistency could be due to inherent ambiguity in the language, disagreement between human annotators, or even human error. This variation in training texts has been shown to be detrimental to the performance of the segmenters trained on them (Sun et al. 2005).

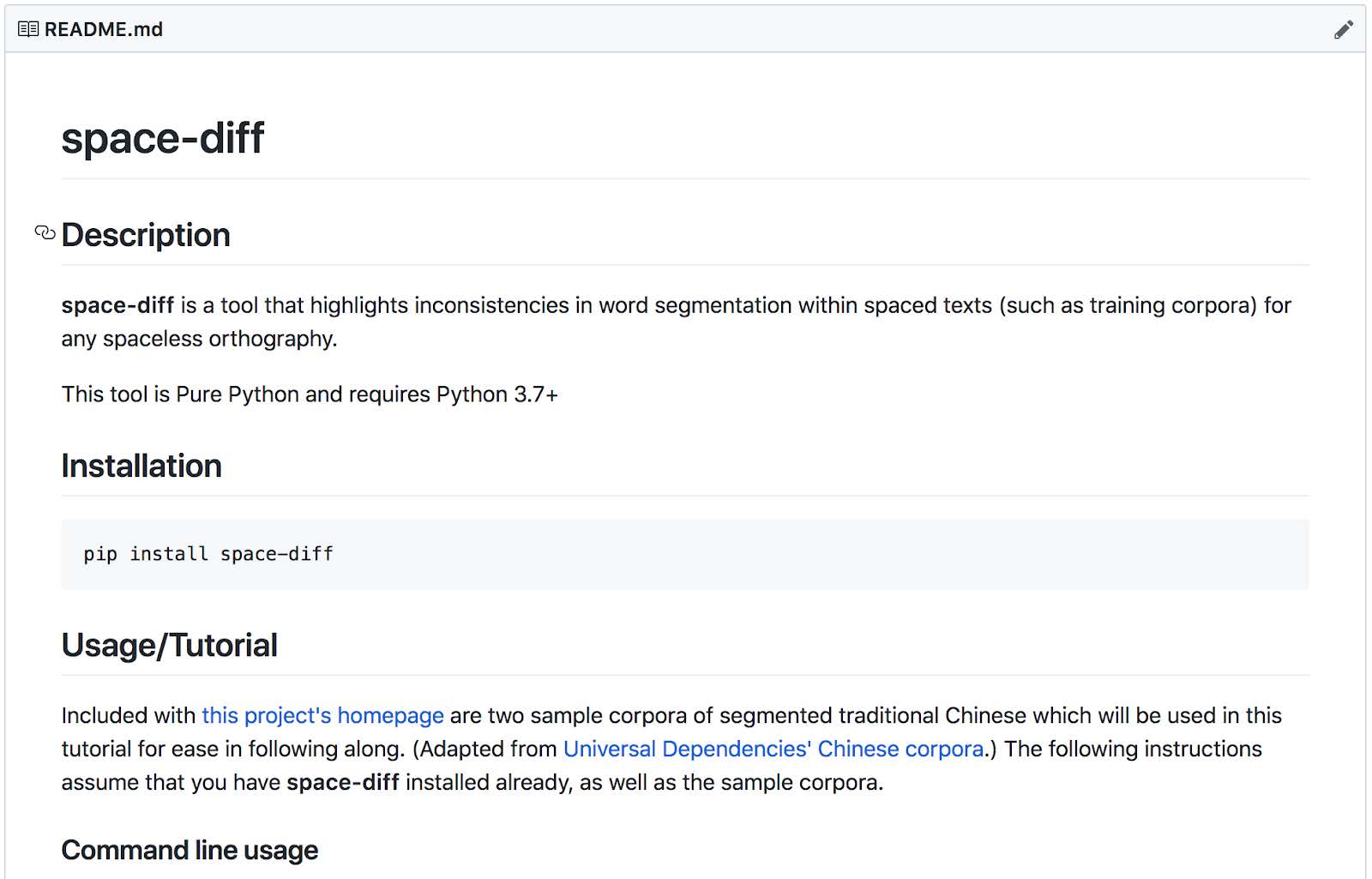

Now, we know that automatic segmentation must come before any other processing when it comes to Chinese and computers. One of those downstream processes is automatic machine translation (think Google Translate). For downstream machine translation, both accuracy and consistency in segmentation are important to the quality of the final translation output. But, Chang et al. (2008) have demonstrated that in the case of translation, consistency is more important than accuracy. So how do we ensure that segmenters are making consistent segmentations? Our solution is to ensure that the training data which informs those statistical segmenters is consistent itself. Our research culminated with the creation of a novel computational tool which allows you to check the consistency of hand-segmented training data. We have called the tool space-diff because it shows differences in spacing and enables you to fix inconsistencies before using the data to train a segmenter. As illustrated in Figure 2, the tool is freely available and open-source so that anyone in the world can use, adapt, and altogether benefit from what we have done.

Figure 2. The homepage for the tool created through this research. https://github.com/smithnlp/space-diff

The implications for this work lie not only in machine translation as an application of consistency-enhanced training data, but in other downstream processes. These include the ability to search the web in Chinese and even tag words for parts of speech. I plan to continue updating and maintaining the tool as a personal endeavor.

Overall, this experience working one-on-one with a faculty member has exposed me to the workings of my desired field, computational linguistics. What’s more, I was able to gain hands-on experience in such a way that would have been impossible through any course. This research experience has been pivotal in my graduate applications and to my future career.

Chang, Pi-Chuan, Michel Galley, and Christopher D. Manning. 2008. “Optimizing Chinese Word Segmentation for Machine Translation Performance.” In Proceedings of the Third Workshop on Statistical Machine Translation – StatMT ’08, 224–32. Columbus, Ohio: Association for Computational Linguistics. https://doi.org/10.3115/1626394.1626430.

Sun, Chengjie, Chang-Ning Huang, Xiaolong Wang, and Mu Li. 2005. “Detecting Segmentation Errors in Chinese Annotated Corpus.” In Proceedings of the Fourth SIGHAN Workshop on Chinese Language Processing. Association for Computational Linguistics. http://aclweb.org/anthology/I/I05/I05-3001