Jacob Olson and Dr. Marc Killpack, Mechanical Engineering Department

Introduction:

Soft robotics is still a relatively new technology. As seen in Figure 1, soft robots are made entirely from compliant materials and use pressurized fluid for both structure and actuation. These robots have great potential: because of their high compliance and low inertia, they can excel in many areas where rigid robots fall short. As soft robotic technologies develop, robots will be able to take on a much wider range of tasks without risk of injuring people. They will be able to work more closely with humans in current industries and to enter new industries.

The biggest drawback of soft robots, and one reason many applications are not yet feasible, is that they have no rigid joints, so they cannot use encoders the way traditional robots do to measure joint angles and configurations accurately. This is a serious problem because a robot cannot be controlled precisely unless its joint configuration can be accurately measured or predicted. Currently, the main method of estimating the joint configuration of a soft robot is a motion capture system: an array of carefully calibrated cameras arranged around the robot that track its movement. This severely limits the usefulness of soft robots, because they can only be operated in rooms equipped with these calibrated systems, which can cost $50,000 or more. For soft robotics to reach its potential, a more robust way of estimating joint configurations, one that is not confined to a single room or environment, must be developed. A better method of estimating joint configurations will allow soft robots to have a much greater impact on the world.

The goal of this project was to research and begin developing methods that can be used to estimate the joint configurations of soft robots in a more robust way.

Methodology:

Before beginning work under the ORCA grant, we researched several potential methods, including inertial measurement units (IMUs), infrared light refraction, linear potentiometers, and tactile sensors. Each of the methods we tested had limitations, so we moved on to a different method in search of better results.

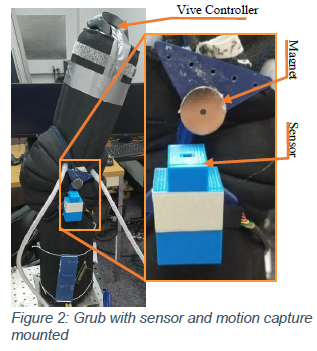

My research used a magnetic angle sensor to estimate the joint configuration. A magnetic angle sensor outputs sine and cosine signals that correspond to the orientation of the magnetic field produced by a nearby magnet. If the sensor is perfectly calibrated, the arctangent of the sine and cosine signals gives the relative angle from the front of the sensor to the magnet's north pole. To begin testing, we mounted the sensor and magnet on a simple single-joint arm, referred to as the Grub, shown in Figure 2. One major difficulty is that, without a rigid joint, the magnet both translated and rotated instead of only rotating. This meant that the sensor could not be calibrated in the way the manufacturer intended.
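The arctangent step can be sketched in Python. This is a minimal sketch, not the manufacturer's calibration procedure: the function name and the offset and amplitude parameters (`sin_offset`, `cos_offset`, `sin_amp`, `cos_amp`) are hypothetical calibration constants of the kind commonly applied to sine/cosine angle sensors. It uses `atan2` rather than a plain arctangent of the ratio so that the signs of both signals resolve the full circle. Like the intended calibration, it assumes the magnet only rotates relative to the sensor, which is exactly the assumption the magnet's translation breaks.

```python
import math

def sensor_angle_deg(sin_raw, cos_raw,
                     sin_offset=0.0, cos_offset=0.0,
                     sin_amp=1.0, cos_amp=1.0):
    """Convert raw sine/cosine channel readings to an angle in degrees (-180 to 180).

    The offset and amplitude arguments are hypothetical calibration constants;
    for an ideal, perfectly calibrated sensor they are 0.0 and 1.0.
    Assumes the magnet only rotates relative to the sensor.
    """
    # Remove each channel's DC offset and scale it to unit amplitude.
    s = (sin_raw - sin_offset) / sin_amp
    c = (cos_raw - cos_offset) / cos_amp
    # atan2 uses the signs of both channels, so it covers the full circle,
    # whereas atan(s / c) would only cover -90 to 90 degrees.
    return math.degrees(math.atan2(s, c))
```

For example, `sensor_angle_deg(2.5, 1.5, sin_offset=1.5, cos_offset=1.5, sin_amp=2.0)` removes the offsets, normalizes the sine channel, and returns approximately 90 degrees.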

As shown in Figure 2, the magnet is mounted on the upper link and the sensor on the lower link, so the magnet rotates with the joint. To determine the true joint angle, we used HTC Vive virtual reality hardware as a motion capture system. The Vive controller can be seen at the top of the left picture in Figure 2.

Results:

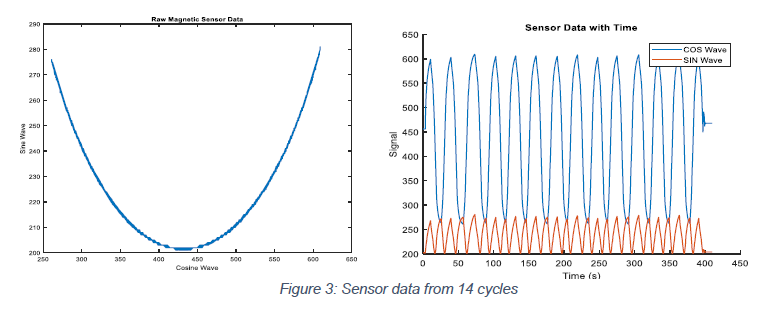

Once the sensor and magnet were rigidly mounted to the arm, the sensor produced very clean data. The readings were consistent over many cycles, with little if any hysteresis. Figure 3 shows preliminary sensor data as the joint was rotated from +90 degrees to -90 degrees and back approximately 14 times.

The left plot overlays the data from every cycle, showing the consistency of the sensor and its lack of hysteresis. The right plot shows the sine and cosine signals over time, with all 14 cycles visible.
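One way to put a number on "little if any hysteresis" is to compare rising and falling sweeps at the same joint angle. The sketch below is an assumption about how such a check could be done, not the analysis behind Figure 3: it bins samples by a reference angle (for example, the Vive yaw), separates samples taken while the angle was increasing from those taken while it was decreasing, and reports the largest gap between the two mean sensor readings in any bin. The function name and the 5-degree default bin width are hypothetical.

```python
import numpy as np

def hysteresis_estimate(angle_deg, signal, bin_width_deg=5.0):
    """Largest gap between rising-sweep and falling-sweep mean signal in any angle bin."""
    angle_deg = np.asarray(angle_deg, dtype=float)
    signal = np.asarray(signal, dtype=float)
    # +1 where the reference angle is increasing, -1 where it is decreasing,
    # 0 at exact turning points (those samples are ignored).
    direction = np.sign(np.gradient(angle_deg))
    # Assign every sample to a fixed-width angle bin.
    edges = np.arange(angle_deg.min(), angle_deg.max() + bin_width_deg, bin_width_deg)
    bin_index = np.digitize(angle_deg, edges)
    worst_gap = 0.0
    for b in np.unique(bin_index):
        in_bin = bin_index == b
        rising = signal[in_bin & (direction > 0)]
        falling = signal[in_bin & (direction < 0)]
        # Only compare bins that were visited in both directions.
        if rising.size and falling.size:
            worst_gap = max(worst_gap, abs(rising.mean() - falling.mean()))
    return worst_gap
```

For a sensor without hysteresis, the result should be near zero apart from noise and sampling effects; a gap well above the noise level would indicate that the sine or cosine reading depends on the direction of motion.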

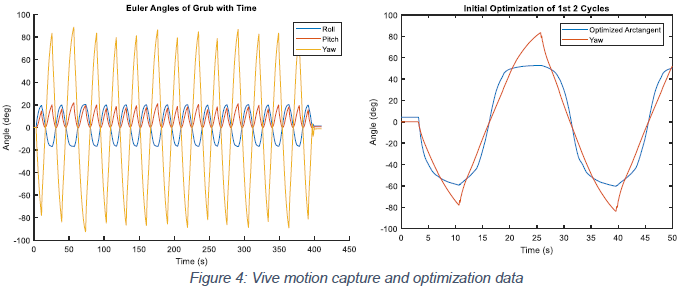

Figure 4 shows the data from the Vive motion capture system: the Euler angles of the controller fixed to the end of the upper link. Most of the motion is in the yaw angle, which cycles between approximately +90 and -90 degrees. The plot on the right shows the initial attempt at optimization. Because the magnet translates as well as rotates, the manufacturer's intended calibration does not work, so further optimization and/or machine learning is needed.
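The Euler angles in Figure 4 come from converting the controller's tracked orientation. The report does not state which library or angle convention was used, so the following is a sketch assuming the orientation is available as a unit quaternion `(w, x, y, z)` and using the common Z-Y-X (yaw-pitch-roll) convention, with yaw about the z axis. If the tracking frame uses a different vertical axis, the components would need to be remapped first. The function name is hypothetical.

```python
import math

def quaternion_to_ypr_deg(w, x, y, z):
    """Convert a unit quaternion to (yaw, pitch, roll) in degrees, Z-Y-X convention.

    Assumes yaw is rotation about the z axis of the tracking frame.
    """
    # Roll: rotation about the x axis.
    roll = math.atan2(2.0 * (w * x + y * z), 1.0 - 2.0 * (x * x + y * y))
    # Pitch: rotation about the y axis; clamp to guard against rounding
    # pushing the argument slightly outside [-1, 1].
    sin_pitch = max(-1.0, min(1.0, 2.0 * (w * y - z * x)))
    pitch = math.asin(sin_pitch)
    # Yaw: rotation about the z axis.
    yaw = math.atan2(2.0 * (w * z + x * y), 1.0 - 2.0 * (y * y + z * z))
    return math.degrees(yaw), math.degrees(pitch), math.degrees(roll)
```

Near a pitch of +90 or -90 degrees, yaw and roll become ill-defined (gimbal lock). Since most of the Grub's motion is in yaw, this is unlikely to matter here, but it is worth checking if the controller is mounted in a different orientation.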

Discussion and Conclusion:

The initial data look very promising for accurately determining joint orientation, and we have begun applying optimization and machine learning techniques to correlate the sensor data with the motion capture data. Once the data are correlated, we will test how accurately the sensor can predict the orientation of the single joint, and then move on to a soft robot arm with several joints to see how feasible this method is. Accurately predicting joint orientation without a motion capture system will allow the soft robotics industry to progress and expand the possibilities of robots.
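As one illustration of correlating the two data streams, the sketch below fits a second-order polynomial in the raw sine and cosine signals to the Vive yaw angle using ordinary least squares (`numpy.linalg.lstsq`). This is a simple regression chosen for illustration, not the optimization or machine learning method used in this project; the feature set and function names are hypothetical, and it assumes the sensor and Vive samples have already been time-aligned.

```python
import numpy as np

def _features(sin_sig, cos_sig):
    """Second-order polynomial features of the two sensor channels (hypothetical choice)."""
    s = np.asarray(sin_sig, dtype=float)
    c = np.asarray(cos_sig, dtype=float)
    return np.column_stack([np.ones_like(s), s, c, s * s, c * c, s * c])

def fit_sensor_to_mocap(sin_sig, cos_sig, yaw_deg):
    """Least-squares coefficients mapping the sensor channels to the reference yaw."""
    A = _features(sin_sig, cos_sig)
    coeffs, *_ = np.linalg.lstsq(A, np.asarray(yaw_deg, dtype=float), rcond=None)
    return coeffs

def predict_yaw(coeffs, sin_sig, cos_sig):
    """Predicted joint angle (degrees) from sensor readings and fitted coefficients."""
    return _features(sin_sig, cos_sig) @ coeffs
```

Fitting on some cycles and computing the RMS error between `predict_yaw` and the Vive yaw on the remaining cycles would give a first estimate of how accurately the sensor can predict the joint orientation. If a low-order polynomial cannot absorb the effect of the magnet's translation, a more flexible model would be the natural next step.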